Google Announces Gemini 3 Flash: Frontier Intelligence Built for Real-World Speed

On December 17, 2025, Google launched Gemini 3 Flash, a model that pairs frontier-level reasoning with Flash-series speed and cost efficiency. Google positions it as a major step forward in AI accessibility and performance for developers, enterprises, and everyday users.

Previous generations forced a trade-off between intelligence and speed. Gemini 3 Flash delivers strong reasoning while running about 3x faster than Gemini 2.5 Pro at a fraction of the cost. That targets one of the main obstacles to real-world AI adoption: responses that are too slow and too expensive.

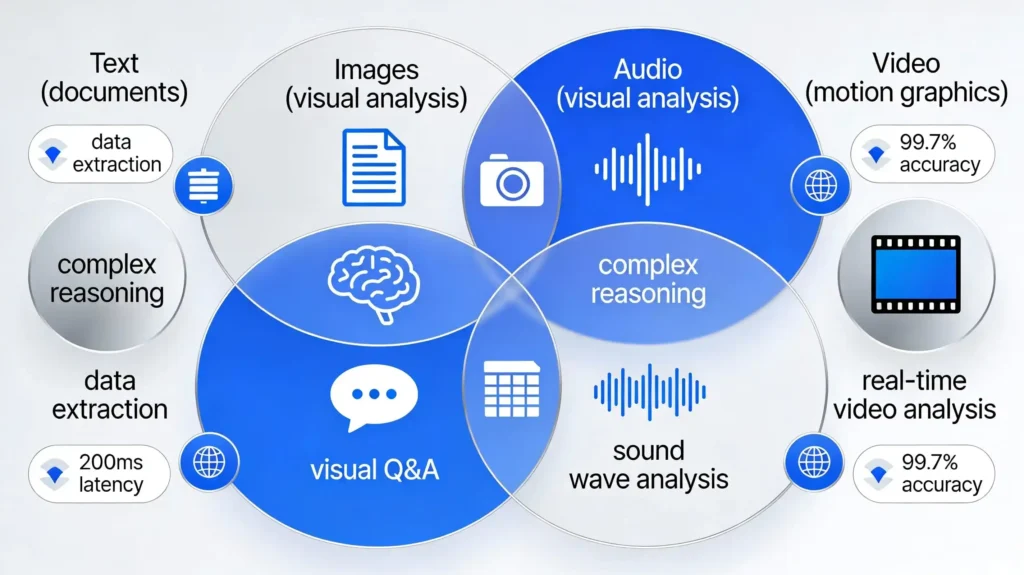

Its multimodal capabilities, spanning text, images, audio, and video, also enable sophisticated applications that were previously limited to specialized research models. With the global rollout beginning immediately, frontier AI becomes widely available, shifting competitive advantage from those with the largest budgets to those with the smartest engineering.

Key transformations reshaping the AI landscape:

Pro-level reasoning meets Flash-level speed: Outperforms Gemini 2.5 Pro while running 3x faster at a dramatically lower cost

Pricing revolution: Just $0.50 per million input tokens and $3 per million output tokens, a fraction of Pro-tier pricing

Multimodal mastery: Real-time video analysis, visual Q&A, data extraction, and complex reasoning across modalities

Immediate availability: Rolling out globally as the default model in the Gemini app and AI Mode, and on developer platforms

Agentic excellence: Superior performance on coding tasks, tool use, and autonomous workflows enabling next-generation applications

Breaking Performance Barriers: How Gemini 3 Flash Redefines AI Excellence

Gemini 3 Flash represents a watershed moment where high-performance AI becomes accessible to mainstream users and developers. Google’s announcement confirms that this model retains Gemini 3’s complex reasoning, multimodal vision understanding, and performance in agentic coding tasks—while adding Flash-series speed, efficiency, and cost advantages.

The distinction matters: frontier models previously forced a choice between intelligence (expensive, slow) and speed (cheap, limited). Gemini 3 Flash narrows that gap considerably. Early benchmarks show the model outperforming Gemini 2.5 Pro across multiple dimensions, including areas where larger models typically dominate. Google presents this as evidence that model architecture and optimization can matter as much as brute-force parameter scaling.

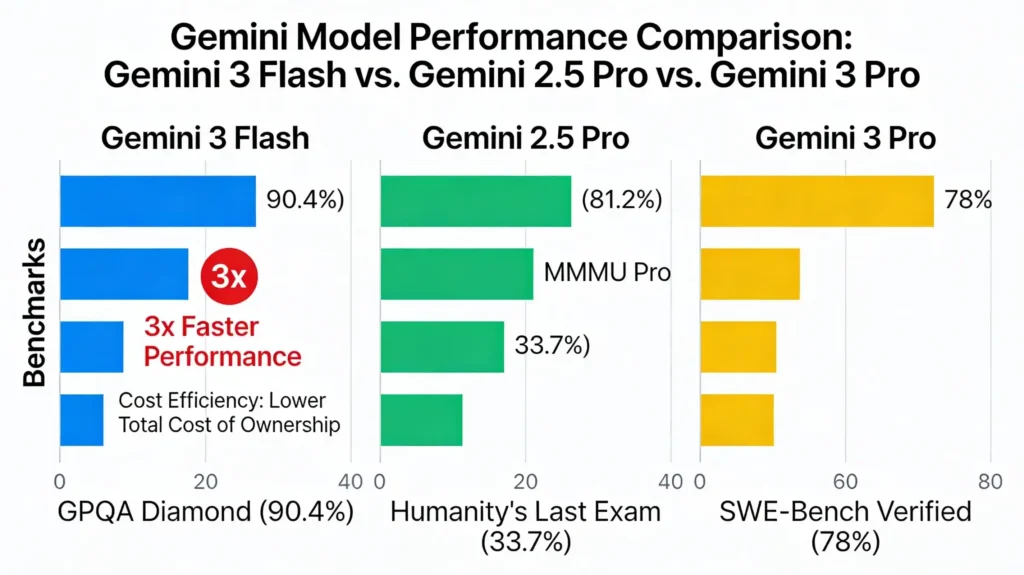

Benchmark Excellence: Competing at Frontier Level While Maintaining Flash Efficiency

The numbers are compelling: Gemini 3 Flash posts benchmark results competitive with much larger models. On GPQA Diamond (scientific knowledge) it scores 90.4%, in the same league as frontier reasoning models. On Humanity’s Last Exam (academic reasoning) it scores 33.7% without tools.

On MMMU Pro (multimodal understanding and reasoning) it scores 81.2%, on par with Gemini 3 Pro. On SWE-Bench Verified (agentic coding) it reaches 78%, a strong result for autonomous coding workflows. These are not marginal gains: they make Flash-series models viable for applications that previously required larger models.

Superior Performance in Specialized Domains

Beyond standard benchmarks, Gemini 3 Flash outperforms Gemini 3 Pro in specific domains. It stands out on Toolathlon (long-horizon, real-world software tasks) and MCP Atlas (multi-step workflows using the Model Context Protocol). This matters for developers building production systems, where domain-specific performance drives commercial success.

Its agentic performance also makes it a strong fit for autonomous AI assistants, customer support systems, and complex workflow orchestration. The result reflects Google’s focus on model optimization: delivering intelligence where it matters most while staying efficient everywhere else.

MULTIMODAL EXCELLENCE AND PRACTICAL APPLICATIONS

Frontier Intelligence Across Every Modality: Text, Images, Audio & Video

Multimodal AI represents the future of human-computer interaction, yet most implementations sacrifice capability for speed. Gemini 3 Flash breaks this pattern by supporting sophisticated reasoning across text, images, audio, and video simultaneously. Google emphasizes the model’s ability to power responsive interactive experiences including real-time video analysis, visual question answering, and automated data extraction at scale.

This multimodal foundation lets developers build customer support agents that analyze video tickets in real time, in-game assistants that understand complex visual environments, and enterprise systems that extract insights from multimedia datasets. In practice, organizations can deploy AI assistants that understand the full context a user provides rather than processing isolated text queries.

Real-World Applications Unlocked by Multimodal Capabilities

Consider concrete use cases where Gemini 3 Flash’s multimodal capabilities create immediate business value:

Customer support automation: The model receives video tickets showing product issues, analyzes the visual problem alongside the written description, and proposes solutions that draw on both.

Real-time video analysis: Security systems can interpret complex scenes, identify anomalies, and escalate concerns intelligently.

Visual data extraction: Documents, charts, and diagrams are processed together, turning unstructured business documents into actionable insights.

Interactive creative tools: Non-technical users can build prototypes through voice commands, sketches, and iterative feedback, lowering the barrier to software development.

These applications can deliver ROI through reduced operational costs, improved customer satisfaction, and faster product development cycles.

Efficiency Through Intelligent Computational Allocation

Google attributes this performance to algorithmic improvements rather than raw computational power. The model adjusts its reasoning effort to task complexity, spending more computation on hard problems while staying lightweight on routine queries. Google also reports that Gemini 3 Flash uses approximately 30% fewer tokens than Gemini 2.5 Pro on typical workloads, which translates directly into lower costs. In effect, the model learns which problems deserve deep thinking and which call for quick pattern matching, so applications get faster responses on routine queries while keeping reasoning depth on complex ones. The approach loosely mirrors human cognition: focused attention where needed, efficiency elsewhere.

PRICING, AVAILABILITY, AND COMPETITIVE IMPLICATIONS

Revolutionary Pricing and Global Availability Transform AI Economics

Pricing is Gemini 3 Flash’s most disruptive characteristic. Input tokens cost $0.50 per million and output tokens $3 per million, a steep discount relative to Pro-tier models. The exact saving depends on the baseline model and on a workload’s mix of input and output tokens.
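How large the saving is depends on the comparison point and the workload. The sketch below compares Gemini 3 Flash’s quoted prices with the Gemini 2.5 Pro prices listed in this article’s comparison table (those baseline figures are taken as given here, not independently verified) for three hypothetical token mixes. With these inputs, savings range from about 86% for purely input-heavy traffic down to about 71% for purely output-heavy traffic.

```python
# Per-million-token prices in USD. Flash prices are quoted in this article;
# the Gemini 2.5 Pro prices come from the article's comparison table (assumed, unverified).
FLASH_IN, FLASH_OUT = 0.50, 3.00
PRO25_IN, PRO25_OUT = 3.50, 10.50


def cost_usd(input_tokens: int, output_tokens: int, in_price: float, out_price: float) -> float:
    """Cost of a workload given per-million-token input and output prices."""
    return (input_tokens * in_price + output_tokens * out_price) / 1_000_000


def savings_pct(input_tokens: int, output_tokens: int) -> float:
    """Percent saved by Flash versus the table's Gemini 2.5 Pro prices for identical token counts."""
    old = cost_usd(input_tokens, output_tokens, PRO25_IN, PRO25_OUT)
    new = cost_usd(input_tokens, output_tokens, FLASH_IN, FLASH_OUT)
    return 100 * (old - new) / old


if __name__ == "__main__":
    # Hypothetical monthly token mixes (input tokens, output tokens).
    workloads = [
        ("input-heavy", 9_000_000, 1_000_000),
        ("balanced", 5_000_000, 5_000_000),
        ("output-heavy", 1_000_000, 9_000_000),
    ]
    for label, tin, tout in workloads:
        print(f"{label}: {savings_pct(tin, tout):.1f}% saved")
```

Running it prints roughly 82.1%, 75.0%, and 71.9% saved for the input-heavy, balanced, and output-heavy mixes, which is why output-heavy applications see the smallest relative discount.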

The pricing reflects Google’s push to broaden access to AI and win market share from competitors. In practical terms, workloads that previously cost thousands of dollars a month may now cost a fraction of that, depending on the model being replaced and the token mix. That lowers the budgetary barrier that kept smaller organizations from adopting AI.

The 3x speed improvement adds a second benefit. Per-token API charges depend only on token prices, but lower latency means each request occupies a worker or connection for less time, so serving the same throughput takes fewer concurrent resources on the application side. Together, speed and pricing change the economics of AI projects.
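One way to see why lower latency saves application-side resources is Little’s law: the average number of requests in flight equals throughput multiplied by latency. The sketch below uses hypothetical load and latency numbers and takes the "3x faster" figure at face value; it shows that the same request rate needs about a third as many concurrent workers or connections. It does not model per-token API charges.

```python
def required_concurrency(requests_per_second: float, latency_seconds: float) -> float:
    """Little's law: average in-flight requests L = arrival rate (lambda) x time in system (W)."""
    return requests_per_second * latency_seconds


if __name__ == "__main__":
    target_rps = 10.0  # hypothetical sustained load
    baseline_latency = 6.0  # hypothetical seconds per response on an older model
    faster_latency = baseline_latency / 3  # the article's "3x faster", taken at face value
    print(required_concurrency(target_rps, baseline_latency))
    print(required_concurrency(target_rps, faster_latency))
```

With these inputs the required concurrency drops from 60 to 20 in-flight requests.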

Immediate Global Rollout Across Consumer and Developer Platforms

Gemini 3 Flash launches immediately as the default model in the Gemini app and in AI Mode in Google Search, available globally at no additional charge. Developers and enterprises can access it through the Gemini API in Google AI Studio, Vertex AI, Gemini CLI, Android Studio, and other platforms.

This dual strategy targets consumer and developer markets at once: everyday users get better AI responses while builders deploy next-generation applications. In the model picker, Gemini 3 Flash appears as the “Fast” option alongside a “Thinking” mode for complex problems, letting users choose their own speed-accuracy trade-off. This accessibility-first approach contrasts with competitors that reserve frontier models for enterprise pricing tiers.

| Feature Dimension | Gemini 2.5 Pro | Gemini 3 Pro | Gemini 3 Flash |
|---|---|---|---|
| Speed (relative to Gemini 2.5 Pro) | Baseline | 1.5-2x faster | 3x faster |
| Input token cost (USD per 1M tokens) | $3.50 | $3.50 | $0.50 |
| Output token cost (USD per 1M tokens) | $10.50 | $10.50 | $3.00 |
| GPQA Diamond | <90% | 91% | 90.4% |
| MMMU Pro | <81% | 81% | 81.2% |
| SWE-Bench Verified | <75% | 77% | 78% |
| Best for | Complex analysis | Advanced reasoning | Speed + reasoning |

Conclusion:

Gemini 3 Flash marks the point where frontier AI becomes a mainstream reality. By delivering Pro-level reasoning at Flash-series speed and pricing, Google breaks the performance-cost-speed triangle that previously limited AI adoption. Organizations no longer have to choose between intelligent AI (expensive, slow) and fast AI (cheap, limited): Gemini 3 Flash combines strong performance, low cost, and high speed.

The immediate global rollout, lower costs, and multimodal capabilities let organizations of every size deploy sophisticated AI assistants, automate complex workflows, and build next-generation applications. The model’s strength on agentic tasks also brings autonomous AI closer to practical reality.

Developers should evaluate Gemini 3 Flash against their production workloads now and, where it holds up, replace older models to capture the cost savings. The competitive landscape is shifting: teams that adopt it early can ship better applications at dramatically lower cost. The democratization of AI has taken a significant step forward.

Frequently Asked Questions

Q1: How does Gemini 3 Flash compare to GPT-4 and other competitors?

A: Gemini 3 Flash performs competitively with frontier models while offering better speed and cost efficiency. Its 90.4% on GPQA Diamond puts it in the same range as the largest models, and it delivers that performance at a fraction of Pro-tier pricing and about 3x the speed of Gemini 2.5 Pro, so benchmark scores alone do not capture the comparison. When choosing between Gemini 3 Flash and competitors, organizations should weigh total cost of ownership, including latency, accuracy, and throughput, rather than isolated benchmark scores.

Q2: Is Gemini 3 Flash suitable for production enterprise applications?

A: Yes, for many workloads. Its benchmark results are competitive with frontier models, and it adds speed and cost advantages. Multimodal capabilities support applications from customer support automation to video analysis. Gemini 3 Flash is also available through Vertex AI, Google’s enterprise AI platform with compliance controls and support options; check the model’s current launch stage and the SLA terms that apply to it before relying on them. Organizations can deploy it for production workloads, particularly where speed and cost efficiency are priorities.

Q3: What’s the difference between “Fast” and “Thinking” modes in the model picker?

A: Fast mode optimizes for immediate responses and suits routine questions that need quick answers. Thinking mode allocates additional reasoning computation to complex problems that demand deeper analysis. Choose based on query complexity: Fast for routine questions (quicker and cheaper to serve), Thinking for challenging problems (more thorough and deliberate). This lets users balance speed, accuracy, and cost according to their actual needs rather than accepting a one-size-fits-all approach.
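Applications that call the model programmatically can automate the same Fast-versus-Thinking choice. The sketch below is a hypothetical client-side router: the `Mode` names, the cue words, and the word-count threshold are illustrative assumptions, not part of any Google API, and mapping a mode onto an actual request setting is left to your integration.

```python
from enum import Enum


class Mode(Enum):
    FAST = "fast"
    THINKING = "thinking"


# Hypothetical cue phrases that suggest multi-step reasoning; tune these for your own traffic.
HARD_CUES = ("prove", "derive", "step by step", "debug", "optimize", "compare", "plan")


def choose_mode(prompt: str, max_fast_words: int = 60) -> Mode:
    """Route short, routine prompts to FAST; long or reasoning-heavy prompts to THINKING."""
    text = prompt.lower()
    if len(text.split()) > max_fast_words:
        return Mode.THINKING
    if any(cue in text for cue in HARD_CUES):
        return Mode.THINKING
    return Mode.FAST


if __name__ == "__main__":
    print(choose_mode("What time zone is Tokyo in?"))
    print(choose_mode("Debug this stack trace and explain the root cause."))
```

The first example routes to `Mode.FAST` and the second to `Mode.THINKING`. A keyword heuristic is deliberately crude; logging which mode each request used makes it easy to refine the rules later.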

Q4: How can developers access Gemini 3 Flash and integrate it into existing applications?

A: Developers can access Gemini 3 Flash through several platforms: Google AI Studio for experimentation, Vertex AI for production deployments, the Gemini API for direct model access, Android Studio for mobile applications, and Gemini CLI for command-line use. A practical path is to prototype in AI Studio, then move proven applications to Vertex AI for enterprise-grade reliability and scaling.
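For direct API access, a minimal sketch using only Python’s standard library follows. It targets the Gemini API’s REST `generateContent` method; the model ID `gemini-3-flash-preview` and the `GEMINI_API_KEY` environment variable name are assumptions to check against the current Gemini API documentation. The optional inline PNG shows how a multimodal request (for example, a chart for data extraction) is packaged. The request is only sent when a key is present.

```python
import base64
import json
import os
import urllib.request
from typing import Optional

# Assumed model ID; confirm the current name in the Gemini API model list.
MODEL_ID = "gemini-3-flash-preview"
ENDPOINT = "https://generativelanguage.googleapis.com/v1beta/models/{model}:generateContent"


def build_request(prompt: str, api_key: str, image_png: Optional[bytes] = None) -> urllib.request.Request:
    """Build (but do not send) a generateContent request, optionally with an inline PNG image."""
    parts = [{"text": prompt}]
    if image_png is not None:
        parts.append({"inline_data": {"mime_type": "image/png",
                                      "data": base64.b64encode(image_png).decode("ascii")}})
    body = json.dumps({"contents": [{"parts": parts}]}).encode("utf-8")
    return urllib.request.Request(
        ENDPOINT.format(model=MODEL_ID),
        data=body,
        headers={"Content-Type": "application/json", "x-goog-api-key": api_key},
        method="POST",
    )


def extract_text(response_json: dict) -> str:
    """Concatenate the text parts of the first candidate in a generateContent response."""
    parts = response_json["candidates"][0]["content"]["parts"]
    return "".join(p.get("text", "") for p in parts)


if __name__ == "__main__":
    key = os.environ.get("GEMINI_API_KEY")
    if key:
        req = build_request("Summarize the benefits of low-latency models in one sentence.", key)
        with urllib.request.urlopen(req) as resp:
            print(extract_text(json.load(resp)))
```

The official SDKs wrap the same endpoint; the raw-HTTP version is shown only because it runs without extra dependencies.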

Q5: What are the token efficiency improvements and why do they matter?

A: Google reports that Gemini 3 Flash uses approximately 30% fewer tokens than Gemini 2.5 Pro on typical workloads. Fewer tokens mean faster processing and proportionally lower costs. For developers handling millions of queries, the savings compound; at large enough scale they could reach hundreds of thousands of dollars a month. Shorter responses also reduce bandwidth and latency for mobile and edge applications that call the model, although the model itself runs in Google’s cloud rather than on-device.
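To isolate the effect of the roughly 30% token reduction from the price difference, the sketch below holds the price fixed at Flash’s quoted $3 per million output tokens and compares monthly output spend before and after the reduction. Applying the 30% figure to output tokens, and the request volume and response length, are assumptions chosen for illustration.

```python
# Gemini 3 Flash output price quoted in this article (USD per million tokens).
FLASH_OUT_PRICE = 3.00
# Google's "approximately 30% fewer tokens"; applying it to output tokens is an assumption.
TOKEN_REDUCTION = 0.30


def monthly_output_cost(requests: int, output_tokens_per_request: float, price_per_million: float) -> float:
    """Monthly output-token spend for a given request volume and per-request output length."""
    return requests * output_tokens_per_request * price_per_million / 1_000_000


if __name__ == "__main__":
    requests_per_month = 10_000_000  # hypothetical volume
    baseline_tokens = 1_000  # hypothetical output tokens per request before the reduction
    before = monthly_output_cost(requests_per_month, baseline_tokens, FLASH_OUT_PRICE)
    after = monthly_output_cost(requests_per_month, baseline_tokens * (1 - TOKEN_REDUCTION), FLASH_OUT_PRICE)
    print(f"before: ${before:,.0f}  after: ${after:,.0f}  saved: ${before - after:,.0f}")
```

With these hypothetical inputs the script reports about $30,000 before and $21,000 after, a saving of about $9,000 a month; reaching hundreds of thousands requires far larger volumes or longer outputs.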

Reference Blogs & Fact Sources

Explore these authoritative sources for comprehensive details and technical specifications:

9to5Google: Google Announces Gemini 3 Flash with Pro-Level Performance, Rolling Out Now – Complete technical breakdown of features, benchmarks, and API pricing

Indian Express: Google Launches Gemini 3 Flash, Promising Faster AI Reasoning at Lower Cost – Comprehensive analysis of performance benchmarks and practical implications

The Verge: Gemini 3 Flash Google AI Model Launch – Industry perspective and competitive analysis

Google Official Blog: Introducing Gemini 3 Flash – Official announcement with technical specifications and vision

Google Developers: Gemini API Documentation – Complete technical documentation for developer integration

Arshia Jahan

Digital marketing and SEO professional focused on content strategy, content optimization, improving search rankings, and delivering results through smart, audience-focused strategies. As a content strategist and SEO professional, I believe that search engines don't buy products; people do. By blending technical SEO precision with a human-first content approach, I provide readers with the strategic blueprints needed to scale in a competitive digital world.