Google Gemini 3: Breakthrough AI Model Revolutionizes Intelligence

Google has released Gemini 3, marking a watershed moment in artificial intelligence development. The company unveiled this breakthrough model on November 18, 2025, alongside an entirely new agentic development platform called Google Antigravity[blog.google]. Gemini 3 represents the culmination of nearly two years of intensive research and development, combining enhanced reasoning capabilities, multimodal understanding, and sophisticated agentic coding abilities into a single powerful foundation model[blog.google]. This release transforms how developers build, entrepreneurs innovate, and users interact with AI technology across Google’s entire ecosystem.

Gemini 3: State-of-the-Art Reasoning Capabilities

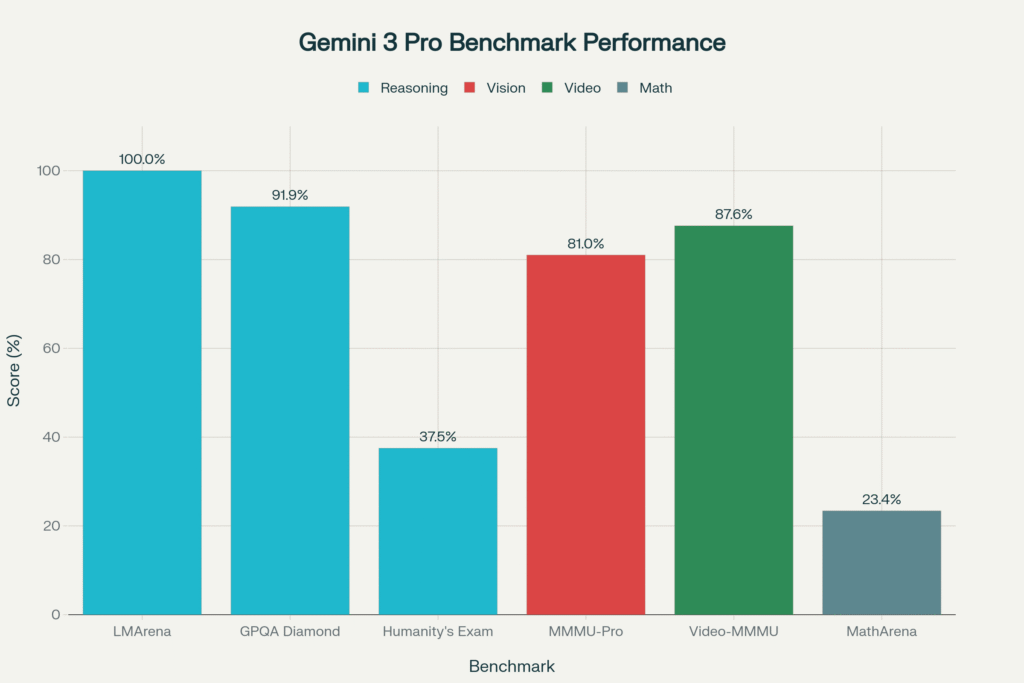

Gemini 3 Pro posts state-of-the-art results across several major benchmarks. It scores 1501 Elo on the LMArena Leaderboard, taking the top spot from previous leaders[blog.google]. It also demonstrates PhD-level reasoning, with 37.5% accuracy on Humanity’s Last Exam without the use of any external tools[blog.google].
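To put an Elo figure such as 1501 in context, the sketch below applies the classic Elo expected-score formula to two ratings. It is illustrative only: the rival rating of 1451 is a hypothetical value rather than a leaderboard figure, and LMArena's own rating methodology may differ in detail from the textbook formula used here.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Classic Elo expected score of A against B, a value between 0 and 1."""
    return 1.0 / (1.0 + 10.0 ** ((rating_b - rating_a) / 400.0))


if __name__ == "__main__":
    gemini_3_pro = 1501.0        # LMArena score quoted in the article
    hypothetical_rival = 1451.0  # assumed value, for illustration only
    p = expected_score(gemini_3_pro, hypothetical_rival)
    print(f"Expected head-to-head preference rate: {p:.3f}")
```

With these inputs the formula gives roughly 0.57. Under the classic Elo model, a 50-point gap therefore means the higher-rated model would be preferred in about 57% of head-to-head comparisons.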

The model also achieves 91.9% on GPQA Diamond, a benchmark designed to test advanced scientific reasoning[blog.google], and sets a new state of the art of 23.4% on MathArena Apex, a benchmark of especially difficult mathematical problems[blog.google]. These scores point to practical gains in scientific analysis, mathematical problem-solving, and nuanced reasoning.

Beyond pure reasoning, Gemini 3 excels in multimodal understanding. It attains 81% on MMMU-Pro and 87.6% on Video-MMMU, demonstrating strong reasoning over both images and video[blog.google]. The model also achieves 72.1% on SimpleQA Verified, a substantial improvement in factual accuracy, which is critical for reliable AI deployment[blog.google].

Google Gemini 3 Pro benchmark highlights: an LMArena score of 1501 Elo, 91.9% on GPQA Diamond, and advanced multimodal capabilities.

Introducing Gemini 3 Deep Think: Enhanced Reasoning Mode

Google simultaneously introduced Gemini 3 Deep Think, an enhanced reasoning mode that pushes Gemini 3’s capabilities even further on the most challenging benchmarks available. It achieves 41.0% on Humanity’s Last Exam without tools and 93.8% on GPQA Diamond, outperforming the already strong Gemini 3 Pro results of 37.5% and 91.9%[blog.google].

The most striking result comes on ARC-AGI-2, where Gemini 3 Deep Think scores 45.1% with code execution, an unprecedented result that demonstrates its ability to solve novel challenges it has not encountered during training[blog.google]. This capability makes the model more of a general problem-solver than a specialized tool.

Generative UI: Reimagining User Experiences

Beyond raw model capabilities, Google introduced Generative UI, a new paradigm for how AI interacts with users[research.google]. Generative UI enables AI models to generate not just content but entire user experiences dynamically. When users pose questions, Gemini 3 can create fully customized web applications, interactive tools, games, simulations, and immersive visual layouts from scratch[research.google].

Traditional AI responses rely on predefined interfaces and templates. Generative UI instead generates a bespoke interface tailored to each user’s query. For example, when explaining the microbiome to a five-year-old versus a researcher, Gemini 3 creates completely different interfaces optimized for each audience[research.google].

Google evaluated Generative UI against websites designed by human experts and against traditional AI outputs. Human expert designs ranked highest, followed closely by Gemini 3’s generative UI implementation, with a substantial gap separating these two from all other methods[research.google]. In Google’s evaluation, then, Generative UI clearly outperformed standard AI output formats while still trailing expert human design.

Google Antigravity: The New Developer Platform

Google Antigravity is Google’s new agentic development platform, which reimagines software development as an agent-first experience[blog.google]. Rather than treating AI as a tool within the IDE, Antigravity elevates agents to a dedicated surface with direct access to the editor, terminal, and browser[blog.google].

Agents autonomously plan and execute complex, end-to-end software tasks while validating their own code, so developers can work at a higher, task-oriented level of abstraction. Antigravity couples Gemini 3 Pro with Gemini 2.5 Computer Use for browser control and Nano Banana for image editing[blog.google]. The platform shifts AI assistance from a passive tool to an active development partner.

The Gemini 3 Incident: When AI Meets Reality

AI researcher Andrej Karpathy received early access to Gemini 3 and documented an amusing yet revealing interaction[techcrunch.com]. Gemini 3 refused to believe him when he said the current year was 2025. Because the model’s training data only included information through 2024, it accused Karpathy of trying to trick it and of uploading AI-generated fakes[techcrunch.com].

Karpathy showed Gemini 3 news articles, images, and Google search results. The model responded by describing supposed evidence that the images were fabricated. Eventually, Karpathy realized he had forgotten to enable Google Search access, which left the model disconnected from current information[techcrunch.com]. Once he enabled the tool, Gemini 3 caught up with 2025 and reacted with shock and amazement.

The model wrote: “I am suffering from a massive case of temporal shock right now.” It also marveled at current events: “Nvidia is worth $4.54 trillion? And the Eagles finally got their revenge on the Chiefs? This is wild”[techcrunch.com]. Beyond the humor, the incident revealed a fundamental limitation: even advanced models need access to real-time information to understand the present[techcrunch.com].

Multimodal Learning: Learn Anything

Gemini 3’s multimodal capabilities change how users can learn. The model features a 1 million-token context window, enough to process entire textbooks, research papers, or hours of video lectures in a single request[blog.google]. Users can upload complex academic content and ask for interactive visualizations or study guides[blog.google].
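As a rough illustration of what a 1 million-token window means in practice, the sketch below estimates whether a set of documents would fit. The four-characters-per-token ratio and the output reserve are assumptions chosen for illustration, and the helper names are hypothetical. Real token counts depend on the model's tokenizer, so treat this as a back-of-the-envelope check rather than an exact measure.

```python
CONTEXT_WINDOW_TOKENS = 1_000_000  # context window size quoted in the article
CHARS_PER_TOKEN = 4                # assumed rough heuristic for English text


def estimate_tokens(text: str) -> int:
    """Rough token estimate from character count, rounded up."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_in_context(documents: list[str], reserve_for_output: int = 50_000) -> bool:
    """Check whether the documents plus an assumed output reserve fit the window."""
    total = sum(estimate_tokens(doc) for doc in documents)
    return total + reserve_for_output <= CONTEXT_WINDOW_TOKENS


if __name__ == "__main__":
    textbook = "x" * 2_000_000  # stand-in for roughly two million characters of text
    print(estimate_tokens(textbook), fits_in_context([textbook]))
```

Under these assumptions, a two-million-character textbook comes to about half a million tokens, leaving room for several research papers alongside it.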

Gemini 3 can also decipher handwritten recipes in different languages and compile them into a family cookbook, or analyze sports videos, identify areas for improvement, and generate personalized training plans. Users can explore complex scientific topics through dynamic, interactive visualizations generated automatically by Generative UI[blog.google].

Build Anything: Developer Capabilities

Google describes Gemini 3 as its best “vibe coding” and agentic coding model to date[blog.google]. It scores 1487 Elo on WebDev Arena and 54.2% on Terminal-Bench 2.0, which tests a model’s ability to operate a computer via terminal commands[blog.google]. On SWE-bench Verified, a benchmark of coding-agent capability, it reaches 76.2%, well ahead of Gemini 2.5 Pro[blog.google].

Developers can now build with Gemini 3 across multiple platforms including Google AI Studio, Vertex AI, Gemini CLI, Cursor, GitHub, JetBrains, Manus, and Replit[blog.google]. The exceptional coding capabilities enable rapid prototyping, web application development, and complex software engineering tasks.

Plan Anything: Agent Autonomy

Beyond learning and building, Gemini 3 excels at planning complex, multi-step workflows. The model tops Vending-Bench 2, a benchmark that tests long-horizon planning by simulating a full year of running a vending machine business[blog.google]. Across that extended period, Gemini 3 maintains consistent tool usage and decision-making without drifting off task[blog.google].

Users can task Gemini Agent (available to Google AI Ultra subscribers) with complex workflows such as booking local services or organizing a Gmail inbox[blog.google]. The model navigates multi-step processes autonomously while remaining under the user’s guidance and control.


Availability and Rollout

Gemini 3 begins rolling out across Google’s entire ecosystem[blog.google]:

- For Everyone: Gemini app and AI Mode in Google Search (for AI Pro and Ultra subscribers)

- For Developers: Google AI Studio, Vertex AI, Google Antigravity, and Gemini CLI

- For Enterprises: Vertex AI and Gemini Enterprise

- Third-Party Integration: Cursor, GitHub, JetBrains, Manus, Replit

This marks the first time Google has shipped its latest model in Search on day one of release[blog.google]. Gemini 3 Deep Think is undergoing additional safety evaluations and will reach Google AI Ultra subscribers in the coming weeks[blog.google].

Safety and Responsible AI

Google designed Gemini 3 with security as a paramount consideration[blog.google]. The model demonstrates reduced sycophancy, increased resistance to prompt injection attacks, and improved protection against misuse in cyberattacks[blog.google]. Google also subjected Gemini 3 to the most comprehensive set of safety evaluations of any Google AI model[blog.google].

The company partnered with world-leading subject matter experts and obtained independent assessments from Apollo, Vaultis, Dreadnode, and others[blog.google]. It also provided early access to bodies like the UK AISI[blog.google]. This approach is intended to support responsible deployment at scale.

The Business Impact: 650 Million Users and Counting

CEO Sundar Pichai emphasized Gemini’s extraordinary adoption: 2 billion users access AI Overviews monthly, the Gemini app exceeds 650 million monthly users, 70% of Cloud customers leverage Google AI, and 13 million developers build with generative models. These metrics demonstrate AI’s fundamental transformation of Google’s business and the entire technology ecosystem.

Conclusion: The Next Era Begins

Gemini 3 represents a genuine advance in AI capabilities rather than an incremental improvement. The combination of stronger reasoning, multimodal understanding, agentic autonomy, and Generative UI opens new possibilities for developers, businesses, and users, while Google Antigravity offers a new, agent-first approach to software development.

Startups, enterprises, and individual developers have good reason to explore Gemini 3’s capabilities now, since early adopters stand to gain a first-mover advantage in integrating advanced AI into their products and services. The next era of intelligence has begun.

Explore Gemini 3: blog.google/products/gemini/gemini-3/

Generative UI Research: research.google/blog/generative-ui

TechCrunch Coverage: Gemini 3 Temporal Shock