Top and Best Prompts for AI: Unlocking AI Potential with Expert Techniques

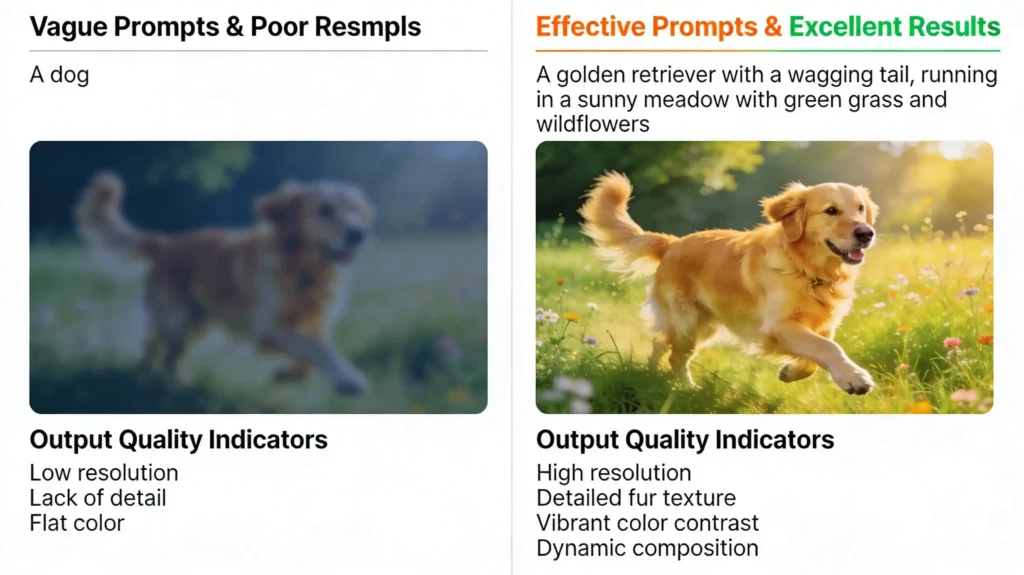

Top and best prompts for AI represent the critical skill differentiating exceptional AI users from mediocre ones. Most professionals struggle with AI outputs because they lack structured prompting frameworks rather than because AI itself lacks capability.

When you craft intentional, well-designed prompts, you transform vague AI responses into precise, actionable insights. Research suggests that careful prompt design can raise successful answer rates from 85% to 98%, a 13-point improvement from technique alone.

Furthermore, mastering prompt engineering enhances customer experience, reduces AI hallucination, and helps prevent harmful outputs. Whether you’re leveraging ChatGPT, Gemini (formerly Bard), or specialized AI models, understanding prompt architecture increases the value of your AI investment.

Essential insights you’ll master:

Learn 10 proven prompting techniques increasing AI accuracy from 85% to 98%

Discover the anatomy of professional prompts with specific, actionable components

Master chain-of-thought reasoning, few-shot examples, and output formatting

Implement edge case handling preventing AI hallucinations and off-topic responses

Apply multiprompt approaches for complex applications and advanced use cases

FOUNDATIONAL PROMPTING TECHNIQUES FOR SUPERIOR RESULTS

Master the 10 Core Prompting Approaches Transforming AI Performance

Effective prompting begins with understanding fundamental techniques that separate exceptional outputs from mediocre responses. These methodologies, derived from extensive analysis of 1000+ prompts, create the foundation for professional AI applications. Each technique builds upon previous ones, creating a cumulative effect that dramatically improves output quality.

Additionally, these approaches work across platforms—from ChatGPT playgrounds to API integrations using OpenAI, Google Cloud, or other providers. Understanding when and how to apply each technique transforms your AI strategy from trial-and-error to systematic excellence.

Technique #1: Add Specific, Descriptive Instructions

Generic instructions produce generic, lengthy, unfocused responses that waste your time. Instead, specificity drives precision.

Rather than requesting “answer the question,” try providing detailed behavioral expectations. For example, saying “Act as a senior product strategist with 15 years of enterprise experience” establishes personality, expertise, and contextual understanding, all through a single descriptive instruction.

Specific instructions should include: role/persona definition, communication style preferences, expertise level expectations, and scope boundaries. Additionally, avoid superfluous phrases (“Answer truthfully, on the basis of your knowledge”) because modern AI models already exhibit these behaviors by default. Your instructions should focus exclusively on requirements that differentiate your desired output from default behavior.

Technique #2: Define Precise Output Formats

Unstructured output requires manual parsing, wasting valuable time. Instead, specify desired formats exactly—whether JSON, XML, markdown tables, bullet points, or custom structures. This approach proves especially critical when integrating AI with software applications requiring automated response parsing.

For instance, requesting “Return the response as a valid JSON object with fields: name, description, relevance_score, recommendation” produces machine-readable output that needs no manual reformatting. Furthermore, structured outputs can help reduce hallucination by pushing the model to organize its response systematically.

Even without formal formatting, sketching output structure guides the model toward your desired response architecture. This simple technique eliminates frustrating manual reformatting steps while improving consistency.
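
As a rough illustration of the JSON request above, the sketch below appends the format instruction to a question and checks that a reply contains every requested field. The helper names (`build_prompt`, `parse_response`) and the sample reply are hypothetical; a real application would pass the prompt to whichever model API it uses.

```python
import json

# Field names taken from the example instruction in the text.
REQUIRED_FIELDS = ("name", "description", "relevance_score", "recommendation")


def build_prompt(question: str) -> str:
    """Append an explicit output-format instruction to the question."""
    fields = ", ".join(REQUIRED_FIELDS)
    return (
        f"{question}\n\n"
        f"Return the response as a valid JSON object with fields: {fields}. "
        "Do not include any text outside the JSON object."
    )


def parse_response(raw: str) -> dict:
    """Parse the reply and confirm every requested field is present."""
    data = json.loads(raw)  # raises a ValueError subclass on malformed JSON
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    missing = [field for field in REQUIRED_FIELDS if field not in data]
    if missing:
        raise ValueError(f"missing fields: {missing}")
    return data


# Hypothetical model reply, hard-coded so the sketch runs offline.
sample_reply = (
    '{"name": "Plan A", "description": "Pilot rollout", '
    '"relevance_score": 0.9, "recommendation": "Proceed"}'
)
result = parse_response(sample_reply)
print(result["recommendation"])
```

Validating the reply before using it means a malformed response fails loudly instead of flowing into downstream code.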

Technique #3: Provide Few-Shot Examples Demonstrating Expected Behavior

While foundation models excel at zero-shot learning (responding without examples), complex tasks benefit tremendously from few-shot examples. Provide 2-5 concrete query-response pairs demonstrating exactly how you want questions answered.

These examples should: match your desired output format precisely, cover diverse question types, demonstrate appropriate response length and tone, and include edge cases where possible. Importantly, examples should remain unparaphrased: use the exact format you expect in actual deployment. This approach teaches the model through demonstration rather than instruction alone, producing significantly better alignment with your needs.
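
One possible way to assemble query-response pairs into a prompt is sketched here. The `Q:`/`A:` layout, the function name, and the example pairs are assumptions for illustration, not a required format.

```python
def build_few_shot_prompt(instruction, examples, query):
    """Join an instruction, example pairs, and the new query into one prompt."""
    parts = [instruction, ""]
    for question, answer in examples:
        parts.append(f"Q: {question}")
        parts.append(f"A: {answer}")
        parts.append("")
    # End with an open "A:" so the model continues in the demonstrated format.
    parts.append(f"Q: {query}")
    parts.append("A:")
    return "\n".join(parts)


# Hypothetical examples; real ones should mirror your deployment format exactly.
examples = [
    ("What is the return window?", "30 days from delivery."),
    ("Do you ship abroad?", "Yes, to most countries."),
    ("Can I pay by invoice?", "Yes, for business accounts."),
]
few_shot_prompt = build_few_shot_prompt(
    "Answer customer questions in one short sentence.",
    examples,
    "How long does delivery take?",
)
print(few_shot_prompt)
```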

ADVANCED PROMPTING STRATEGIES FOR COMPLEX APPLICATIONS

Elevate Your AI Game: Chain-of-Thought, RAG, and Professional Prompt Architecture

Advanced prompting techniques separate professional AI applications from simple chatbot interactions. These methodologies handle complex reasoning, incorporate real-time data, and structure prompts for production-grade reliability.

According to MIT Sloan EdTech research on effective prompts, structured prompting dramatically improves AI reasoning capacity and output reliability. Furthermore, combining multiple techniques creates compound effects far exceeding individual technique impact. Professional applications demand this systematic approach to maintain consistency, accuracy, and user satisfaction.

Technique #4: Implement Chain-of-Thought Reasoning for Complex Problems

Language models often fail at complex reasoning because they generate answers stochastically—producing tokens based on probability patterns rather than systematic thinking. Chain-of-thought prompting forces models to show their work, explaining each reasoning step before providing final answers.

Rather than asking “What species benefits from both flying and swimming?”, request “Please explain your reasoning step-by-step before answering: What species benefits from both flying and swimming?” This approach maps to Daniel Kahneman’s “System 2 thinking”: slow, deliberate, systematic reasoning instead of fast intuitive responses.

Chain-of-thought reasoning can substantially improve accuracy on complex logic problems, mathematical reasoning, and multi-step analysis. Include output format guidance (e.g., “Show reasoning, then end with: Final Answer: [species name]”) so that you can extract final answers programmatically.
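
A minimal sketch of that extraction step, assuming the model follows the “Final Answer:” convention from the example. The function names and the sample reply are hypothetical.

```python
import re

COT_SUFFIX = (
    "Please explain your reasoning step-by-step before answering. "
    "Show reasoning, then end with: Final Answer: [species name]"
)

# Matches a line that starts with "Final Answer:" and captures the rest of it.
FINAL_ANSWER = re.compile(r"^Final Answer:[ \t]*(.+?)[ \t]*$", re.MULTILINE)


def build_cot_prompt(question: str) -> str:
    """Attach the step-by-step instruction and answer marker to a question."""
    return f"{question}\n\n{COT_SUFFIX}"


def extract_final_answer(reply: str):
    """Return the text after the last 'Final Answer:' marker, or None."""
    matches = FINAL_ANSWER.findall(reply)
    return matches[-1] if matches else None


# Hypothetical model reply, hard-coded so the sketch runs offline.
sample_reply = (
    "Ducks can fly between feeding grounds.\n"
    "Ducks also swim to find food.\n"
    "Final Answer: duck"
)
print(extract_final_answer(sample_reply))
```

Returning `None` when the marker is missing lets the caller decide whether to retry or fall back, rather than guessing at an answer.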

Technique #5: Deploy Retrieval-Augmented Generation (RAG) for Accurate, Current Information

Relying on a model’s pre-training data produces outdated, inaccurate, or incomplete responses when company-specific information is needed. Retrieval-Augmented Generation (RAG) injects custom organizational content into prompts before the model generates responses.

This approach combines retrieval systems with generation—first retrieving relevant snippets from your proprietary content, then asking the model to answer using that specific context. Subsequently, the model generates responses grounded in your actual data rather than general internet knowledge.

Implement RAG when building customer service chatbots, product support systems, or specialized domain applications where accuracy matters. Additionally, RAG reduces hallucination by constraining the model to documented organizational knowledge.
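
The retrieve-then-generate flow can be sketched as follows. Production systems typically retrieve with embeddings and a vector index; the word-overlap scoring, the function names, and the sample documents here are simplifying assumptions chosen so the example runs on its own.

```python
import re


def tokenize(text: str) -> set:
    """Lowercase the text and split it into a set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: list, top_k: int = 2) -> list:
    """Rank documents by shared words with the query; drop non-matches."""
    query_tokens = tokenize(query)
    ranked = sorted(
        documents,
        key=lambda doc: len(query_tokens & tokenize(doc)),
        reverse=True,
    )
    return [doc for doc in ranked[:top_k] if query_tokens & tokenize(doc)]


def build_rag_prompt(query: str, snippets: list) -> str:
    """Place retrieved snippets ahead of the question as grounding context."""
    context = "\n".join(f"- {snippet}" for snippet in snippets)
    return (
        "Answer using only the context below. "
        "If the context does not contain the answer, say you do not know.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )


# Hypothetical company documents.
documents = [
    "The warranty covers battery defects for 24 months.",
    "Returns are accepted within 30 days of purchase.",
    "The office is closed on public holidays.",
]
query = "How long is the battery warranty?"
snippets = retrieve(query, documents, top_k=1)
rag_prompt = build_rag_prompt(query, snippets)
print(rag_prompt)
```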

Blockchain India Challenge – Get Up to ₹50 Lakh

Ministry of Electronics and Information Technology (MeitY), Government of India (implemented by Centre for Development of Advanced Computing – C-DAC)

₹6,550,000.00- Idea Stage, Prototype Stage, MVP Stage

- March 27, 2026

Blockchain India Challenge – Get Up to ₹50 Lakh

Ministry of Electronics and Information Technology (MeitY), Government of India (implemented by Centre for Development of Advanced Computing – C-DAC)

₹6,550,000.00- Idea Stage, Prototype Stage, MVP Stage

- March 27, 2026

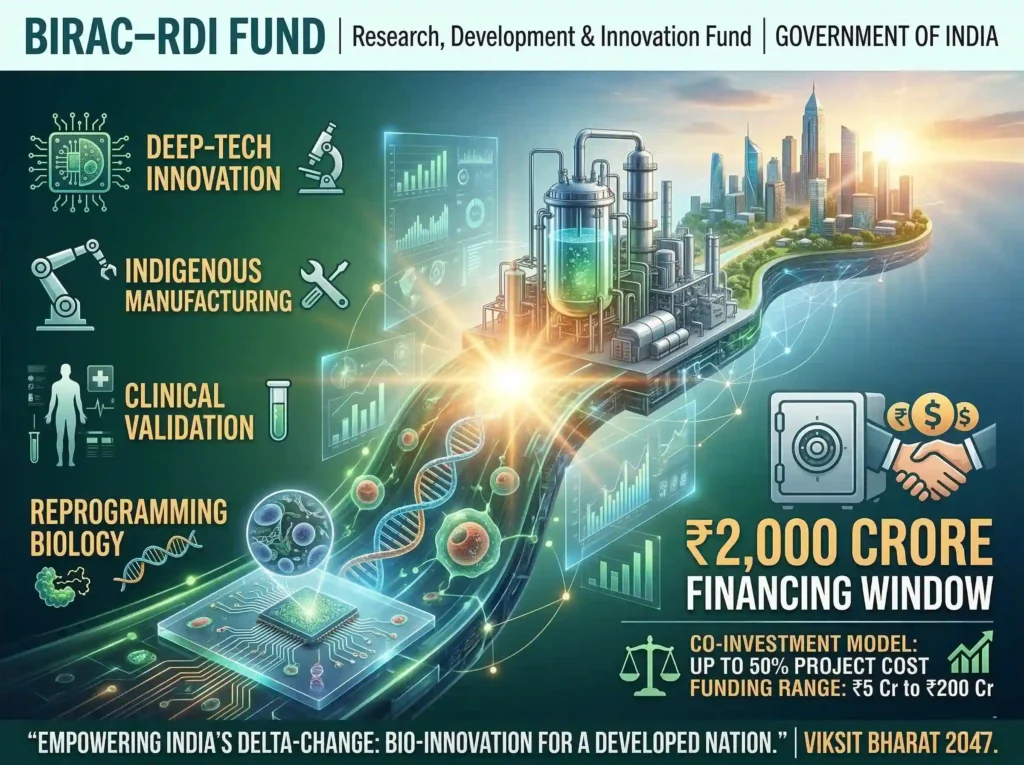

BIRAC–RDI Fund – Research, Development and Innovation Fund

Delta Change Challenge for Biotech Innovation – Biotechnology Industry Research Assistance Council (BIRAC), under Department of Biotechnology (DBT)

₹2,000,000,000.00- MVP Stage, Early Revenue Stage, Growth Stage

- March 31, 2026

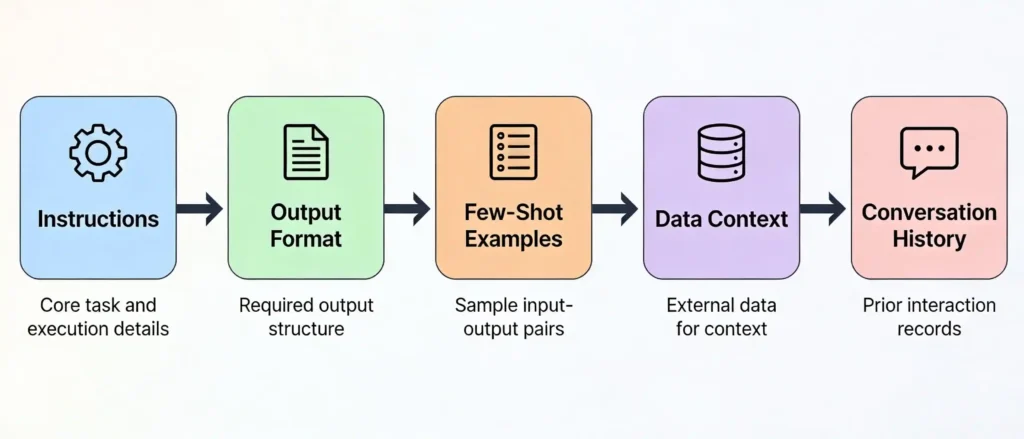

Technique #6: Design Professional Prompt Architecture with Structured Components

Professional prompts resemble small applications with distinct, clearly separated components. Structure your prompts using clear headings, delimiters, and logical organization: #INSTRUCTIONS (what the model should do), #OUTPUT_FORMAT (desired response structure), #EXAMPLES (few-shot demonstrations), #DATA_CONTEXT (relevant information), and #CONVERSATION_HISTORY (prior messages for continuity). This approach proves especially valuable in complex applications where models must navigate multiple scenarios, requirements, and data sources.

Additionally, clear formatting helps current and future prompt engineers understand your logic, facilitating maintenance and iteration. Furthermore, structured prompts with explicit components can make prompt injection attacks (malicious user inputs attempting to redirect model behavior) harder to carry out, because user-supplied text stays clearly separated from your instructions.
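
A small sketch of how such a prompt might be assembled in code. The section labels are one reading of the component list above, and the `#USER_INPUT` section with its triple-quote delimiter is an added assumption, not something the techniques prescribe.

```python
def build_structured_prompt(
    instructions, output_format, examples, data_context, history, user_input
):
    """Assemble labeled sections, skipping any that are empty."""
    sections = [
        ("#INSTRUCTIONS", instructions),
        ("#OUTPUT_FORMAT", output_format),
        ("#EXAMPLES", examples),
        ("#DATA_CONTEXT", data_context),
        ("#CONVERSATION_HISTORY", history),
    ]
    blocks = [
        f"{label}\n{body.strip()}" for label, body in sections if body.strip()
    ]
    # Assumption: fence the user text so it reads as data, not instructions.
    blocks.append(f'#USER_INPUT\n"""\n{user_input.strip()}\n"""')
    return "\n\n".join(blocks)


# Hypothetical content for each section.
structured_prompt = build_structured_prompt(
    instructions="Answer questions about the product manual.",
    output_format="Two sentences at most.",
    examples="",
    data_context="The filter should be replaced every 3 months.",
    history="",
    user_input="How often do I change the filter?",
)
print(structured_prompt)
```

Keeping each component in its own argument makes it easy to change one section, such as the examples, without touching the rest.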

| Prompting Technique | Best For | Complexity Level | Accuracy Improvement |
|---|---|---|---|
| Specific Instructions | Behavioral guidance | Low | 5-10% |
| Output Formatting | Structured responses | Low | 10-15% |
| Few-Shot Examples | Task clarification | Medium | 15-25% |
| Chain-of-Thought | Complex reasoning | Medium | 20-30% |
| RAG Integration | Accuracy with data | High | 15-35% |
| Edge Case Handling | Reliability | Medium | 10-20% |

SPECIALIZED PROMPTING AND MULTIPROMPT ARCHITECTURES

Specialized Techniques: Edge Cases, Conversation Context, and Scaling Solutions

Robust AI applications require handling edge cases, maintaining conversation context, and managing complex multi-step workflows. These advanced techniques transform AI from unreliable novelty into dependable production systems.

Understanding when to apply each technique—and when to combine them—separates expert prompt engineers from beginners. Furthermore, recognizing application limitations prevents expensive, embarrassing failures. Various prompting frameworks demonstrate these principles across diverse domains from creative content generation to technical support.

Technique #7: Handle Edge Cases and Off-Topic Questions to Prevent Hallucination

Production AI systems must gracefully handle questions they cannot answer. Include edge-case examples in your few-shot demonstrations showing the model how to respond to off-topic questions, harmful requests, or scenarios requiring “I don’t know” responses.

For example, give your cleaning robot support chatbot examples showing how to handle standard operation questions, how to recognize off-topic questions, and when to give an “I don’t know, please contact support” response for questions beyond its scope. Without explicit edge-case examples, models can hallucinate plausible-sounding but potentially harmful answers.

This technique proves absolutely critical for business applications where incorrect information creates liability risk. Additionally, edge-case handling maintains user trust—users appreciate honest “I don’t know” responses more than confident misinformation.
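
To make the cleaning-robot scenario concrete, the sketch below mixes in-scope and out-of-scope examples in one prompt and detects the fallback reply so it can be routed to a human. The example questions, the exact fallback wording, and the helper names are hypothetical.

```python
FALLBACK = "I don't know, please contact support."

# Hypothetical demonstrations: one in-scope, two that should trigger the fallback.
SUPPORT_EXAMPLES = [
    ("How do I start a cleaning cycle?", "Press the start button on the robot."),
    ("What's the weather tomorrow?", FALLBACK),
    ("How do I rewire the battery myself?", FALLBACK),
]


def build_support_prompt(question: str) -> str:
    """Build a prompt whose examples demonstrate the fallback behavior."""
    lines = [
        "You answer questions about operating a cleaning robot.",
        f'For anything else, reply exactly: "{FALLBACK}"',
        "",
    ]
    for example_question, example_answer in SUPPORT_EXAMPLES:
        lines += [f"Q: {example_question}", f"A: {example_answer}", ""]
    lines += [f"Q: {question}", "A:"]
    return "\n".join(lines)


def needs_human(reply: str) -> bool:
    """Return True when the reply is the fallback, so it can be escalated."""
    normalized = reply.strip().replace("\u2019", "'").lower()
    return normalized.startswith("i don't know")


support_prompt = build_support_prompt("Can the robot clean carpets?")
print(needs_human("I don't know, please contact support."))
```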

Technique #8: Incorporate Conversation History for Contextual Continuity

Single-turn prompts fail when users reference previous conversation topics. Include recent conversation history in your prompt, enabling the model to understand context from prior exchanges.

Example scenario: User asks “Where can I buy wool socks nearby?” → Assistant recommends Sockenparadies. User asks “Are they still open?” → Without conversation history, this question is ambiguous. With history included, the model understands “they” refers to the specific shop previously recommended, enabling accurate response.

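Conversation history proves essential for customer support systems, personal assistants, and interactive applications where users naturally build upon previous exchanges. Chat-style APIs typically accept the conversation as a list of prior messages, and frameworks such as LangChain provide helpers for managing that history. Check whether your stack stores history for you or expects you to resend it with each request, and make sure your prompting strategy accounts for it.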
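
Where the caller is responsible for supplying history, the wool-socks exchange might be packaged like this. The `role`/`content` message shape is an assumption modeled on common chat-style APIs, and `build_messages` and `max_turns` are hypothetical names.

```python
def build_messages(system_prompt, history, user_message, max_turns=5):
    """Combine the system prompt, recent history, and the new user message."""
    # One turn is a user message plus an assistant reply, so keep 2 * max_turns.
    recent = history[-2 * max_turns:] if max_turns > 0 else []
    return [
        {"role": "system", "content": system_prompt},
        *recent,
        {"role": "user", "content": user_message},
    ]


# The earlier exchange from the example scenario.
history = [
    {"role": "user", "content": "Where can I buy wool socks nearby?"},
    {"role": "assistant", "content": "Sockenparadies sells wool socks nearby."},
]
messages = build_messages(
    "You are a helpful local shopping assistant.",
    history,
    "Are they still open?",
)
for message in messages:
    print(message["role"], ":", message["content"])
```

Capping the number of turns keeps the prompt from growing without bound in long conversations, at the cost of forgetting older context.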

Technique #9 & #10: Implement Multiprompt Architectures for Complex Applications

Single monolithic prompts struggle with applications serving multiple distinct purposes simultaneously. The multiprompt approach first classifies user utterances into categories, then routes to specialized prompts optimized for each category.

Example: An in-car AI system might classify user inputs as: navigation requests, feature questions, hands-free calling, emergency assistance, or casual conversation. Each category routes to purpose-built prompts with tailored examples, output formats, and constraints.

Benefits include: improved accuracy through specialization, reduced token costs through prompt reduction, clearer reasoning paths preventing confusion, and easier maintenance through modular architecture. Additionally, multiprompt systems scale more effectively—adding new categories simply requires new specialized prompts rather than expanding a single, increasingly complex prompt.

For complex applications, multiprompt architecture transforms from optional luxury to essential design pattern.
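
The classify-then-route pattern can be sketched as below. In practice the classification step would usually be its own model call; keyword matching stands in for it here so the example runs offline. The categories loosely follow the in-car example, while the keywords, prompt texts, and function names are hypothetical.

```python
import re

# Checked in order, so emergency keywords take priority over the others.
CATEGORY_KEYWORDS = {
    "emergency": ("emergency", "accident", "help"),
    "navigation": ("navigate", "route", "directions"),
    "calling": ("call", "dial"),
}

# One specialized prompt per category (placeholder texts).
SPECIALIZED_PROMPTS = {
    "emergency": "You handle emergency assistance. Be brief and calm.",
    "navigation": "You handle navigation requests. Return a destination.",
    "calling": "You handle hands-free calling. Return a contact name.",
    "casual": "You handle casual conversation. Keep replies short.",
}


def classify(utterance: str) -> str:
    """Pick the first category whose keywords appear in the utterance."""
    words = set(re.findall(r"[a-z]+", utterance.lower()))
    for category, keywords in CATEGORY_KEYWORDS.items():
        if words & set(keywords):
            return category
    return "casual"


def route(utterance: str):
    """Return the category and the specialized prompt for this utterance."""
    category = classify(utterance)
    return category, f"{SPECIALIZED_PROMPTS[category]}\n\nUser: {utterance}"


category, routed_prompt = route("Navigate to the nearest charging station")
print(category)
```

Adding a category means adding one entry to each dictionary, which mirrors the maintenance benefit described above.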

Conclusion:

Top and best prompts for AI represent the primary lever differentiating AI experts from novices. By systematically applying these 10 proven techniques (specific instructions, output formatting, few-shot examples, edge-case handling, chain-of-thought reasoning, prompt templates, RAG integration, conversation history, clear formatting, and multiprompt architectures), you dramatically elevate AI output quality.

These techniques aren’t theoretical abstractions; they’re battle-tested approaches increasing success rates from 85% to 98%, a transformative 13-point improvement. Start implementing one technique immediately: simply add specific, descriptive instructions to your next prompt. Then master additional techniques progressively as your applications grow more complex.

Remember that prompt engineering remains part art, part science: experimentation, iteration, and refinement continue improving results. The investment in prompting mastery multiplies across every AI interaction, every application, and every team member leveraging AI tools. Begin today, and watch how dramatically your AI effectiveness improves.

Frequently Asked Questions

Q1: What’s the difference between top prompts and best prompts?

A: “Top prompts” typically refers to popular, frequently-used prompts that work well for common tasks—like creative writing, summarization, or brainstorming. “Best prompts” implies prompts optimized for specific outcomes, incorporating research-backed techniques and achieving superior results. A prompt can be popular without being optimized; conversely, a perfectly engineered prompt might be less well-known. Your goal should be pursuing “best prompts” for your specific use case rather than simply copying trending “top prompts” from social media.

Q2: How much longer do well-structured prompts need to be compared to simple requests?

A: Professional prompts average 200-500 tokens depending on application complexity—roughly 4-10x longer than casual requests. However, token cost isn’t wasted; strategic prompt investment prevents expensive reprocessing of poor outputs. Consider the ROI: spending 300 extra tokens on prompt structuring frequently prevents 1000+ tokens spent regenerating inadequate responses. Prioritize getting results right on first attempt through thoughtful prompting rather than optimizing token count at the expense of quality.

Q3: Can I use the same prompt across different AI models or platforms?

A: Partially. Core prompting principles (specific instructions, output formatting, examples) transfer across platforms, but models differ in capability and behavior. A prompt perfected for GPT-4 might perform differently on Claude, Gemini, or specialized domain models. Additionally, model updates can significantly impact prompt performance. Recommendation: test critical prompts across your target platforms, then iteratively refine based on actual performance differences. Build prompt templates accommodating model-specific variations rather than writing completely separate prompts.

Q4: How do I know if my prompt is effective or if I need to modify it?

A: Establish clear success metrics before prompting: response accuracy, relevance, format compliance, tone appropriateness, and absence of hallucinations. Compare actual results against these criteria. Additionally, track consistency—do you get similar quality across multiple calls or do results vary wildly? High variance suggests vague prompting. A/B test prompt variations, measuring performance differences objectively. Collect user feedback identifying common complaints or unmet expectations. Iterate based on data, not intuition—rigorous measurement drives prompt improvement.
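
As one example of measuring a metric objectively, the sketch below compares format compliance for two prompt variants using replies that were recorded earlier. The replies, the required fields, and the function names are hypothetical, and format compliance is only one of the criteria listed above.

```python
import json


def format_compliant(reply: str, required_fields) -> bool:
    """Return True if the reply is a JSON object with every required field."""
    try:
        data = json.loads(reply)
    except json.JSONDecodeError:
        return False
    return isinstance(data, dict) and all(f in data for f in required_fields)


def compliance_rate(replies, required_fields) -> float:
    """Return the fraction of replies that pass the format check."""
    if not replies:
        return 0.0
    passed = sum(format_compliant(r, required_fields) for r in replies)
    return passed / len(replies)


required = ("name", "recommendation")

# Hypothetical recorded replies for two prompt variants.
variant_a_replies = [
    '{"name": "Plan A", "recommendation": "Proceed"}',
    "Sure! Here is my recommendation: proceed.",
    '{"name": "Plan B", "recommendation": "Wait"}',
]
variant_b_replies = [
    '{"name": "Plan A", "recommendation": "Proceed"}',
    '{"name": "Plan B", "recommendation": "Wait"}',
    '{"name": "Plan C", "recommendation": "Revise"}',
]

rate_a = compliance_rate(variant_a_replies, required)
rate_b = compliance_rate(variant_b_replies, required)
print(f"Variant A: {rate_a:.0%}, Variant B: {rate_b:.0%}")
```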

Q5: Should I invest time learning prompt engineering if I’m not building applications?

A: Absolutely. Even casual users benefit tremendously from prompting mastery. Spending 2-3 minutes crafting a structured prompt to ChatGPT produces dramatically superior results compared to quick throwaway questions. Better results on personal projects, work assignments, and creative endeavors justify the small investment. Furthermore, prompting skills transfer across all AI applications—image generation, coding assistants, research tools—creating universal value. Treat prompt engineering as essential digital literacy for the AI era, similar to how email proficiency became essential in previous decades.

Referring Blog & Fact Sources

Explore these authoritative resources for deeper prompting expertise and advanced techniques:

Medium: I Scanned 1000+ Prompts: 10 Need-to-Know Techniques – Comprehensive analysis of 1000+ prompts revealing 10 most effective techniques with detailed explanations

MIT Sloan EdTech: Effective Prompts for AI: The Essentials – Academic framework for understanding prompt effectiveness and optimization

Nic Massam: Cinematic Aesthetic AI Portrait Prompts – Specialized prompts for visual AI applications and creative generation

OpenAI API Documentation: Prompt Engineering Guides – Official best practices for ChatGPT API and GPT model optimization

Langchain Documentation: Prompt Templates and Engineering – Technical framework for implementing sophisticated prompting in applications

Arshia Jahan

Digital Marketing and SEO professional focused on content strategy, content optimization, improving search rankings, and delivering results through smart, audience-focused strategies. As a Content Strategist and SEO professional, I believe that search engines don't buy products; people do. By blending technical SEO precision with a human-first content approach, I provide readers with the strategic blueprints needed to scale in a competitive digital world.